Join Clinicians Worldwide

Evidence-based insights to enhance hearing care—twice a month

Subscribe Now

MED-EL

Published May 11, 2022

How can I know whether a child hears a sound well enough to identify it? Which speech sounds do children typically master earliest following implantation? Is it ‘normal’ for a three-year-old to drop the final consonants in words?

The Building Blocks of Speech is a friendly, ready-reference tool that can help answer questions like these, drawing on evidence-based literature from the fields of speech acoustics and speech development. In this post, we’ll share a brief tutorial on how to use the Building Blocks of Speech to analyze the auditory discrimination, articulation, and phonology skills of children with hearing loss.

Speech is a complex acoustic signal, and one auditory feature we rely on to recognize individual phonemes is the resonant formant profile. If a formant could be compared to playing an individual key on the piano, then a speech sound would resemble playing a chord, with each formant representing a single note.

In reality, a speech formant is not a pure tone at all but a band of acoustic energy that reflects a resonance peak. In other words, a formant marks a frequency range in which the air in the vocal tract resonates most strongly, so that energy in that range is amplified.

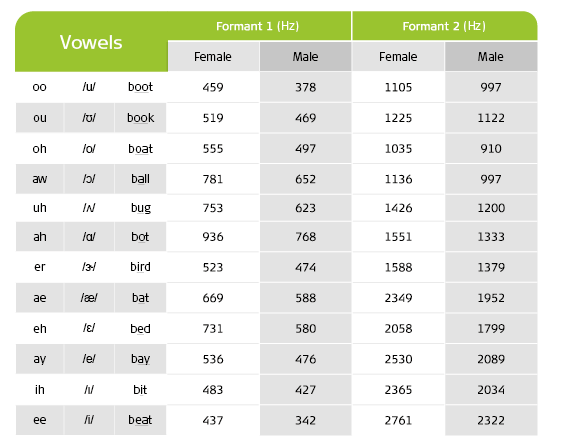

Table 1: Average formant values of English vowels for female and male voices

The first and second formants, F1 and F2 respectively, help us identify vowels. Table 1 displays the average formant values for 12 standard English vowels. To detect a speech sound, we must hear at least one of its formants; to identify it, we must distinguish multiple formants.
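The detection and identification rule above can be written as a small decision function. This is a minimal sketch, assuming we already know which formants of a sound are audible (for example, from an audiogram comparison like the one described next). `classify_access` is a hypothetical helper, and treating identification as requiring every listed formant (at least two) to be audible is a simplifying assumption. These are necessary conditions, not guarantees that a listener will identify the sound.

```python
from typing import Mapping

def classify_access(formant_audible: Mapping[str, bool]) -> str:
    """Apply the detection/identification rule of thumb to one speech sound.

    formant_audible maps formant labels (e.g. "F1", "F2") to whether the
    listener can hear that formant. Identification is treated here as
    requiring every listed formant, and at least two, to be audible
    (a simplifying assumption).
    """
    heard = sum(formant_audible.values())  # True counts as 1
    if heard == 0:
        return "not detectable"
    if heard == len(formant_audible) and heard >= 2:
        return "identifiable"
    return "detectable only"

print(classify_access({"F1": True, "F2": True}))   # identifiable
print(classify_access({"F1": True, "F2": False}))  # detectable only
```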

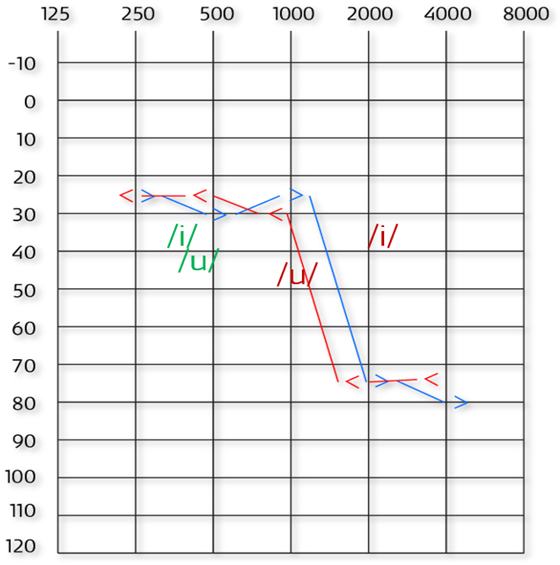

To determine whether an individual has sufficient auditory access to recognize a vowel, we compare their most recent audiogram (e.g., aided or implant thresholds, if they wear hearing technology) with the formant information that describes that vowel. The audiogram in Figure 1 displays the unaided thresholds for both ears as well as the first and second formants for the vowels /u/ and /i/.

Figure 1: Example audiogram—Behavioral bone conduction thresholds for left (<) and right (>) ears. Formants for /u/ and /i/ in green (F1) and red (F2).
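The audiogram-versus-formant comparison can be sketched in code. This assumes thresholds in dB HL at the tested frequencies, and that each formant is plotted on the audiogram as a frequency and a level in dB HL; a formant is treated as audible when the threshold at its frequency is at or below that level. Interpolating linearly on a log-frequency axis between tested frequencies is our assumption, as are the helper names and the example audiogram and formant levels, which are hypothetical and not the ones shown in Figure 1.

```python
import bisect
import math
from typing import Mapping

def threshold_at(audiogram: Mapping[float, float], freq_hz: float) -> float:
    """Estimate the threshold (dB HL) at freq_hz by linear interpolation on a
    log-frequency axis between the nearest tested frequencies."""
    freqs = sorted(audiogram)
    if not freqs[0] <= freq_hz <= freqs[-1]:
        raise ValueError(f"{freq_hz} Hz is outside the tested range")
    i = bisect.bisect_left(freqs, freq_hz)
    if freqs[i] == freq_hz:
        return audiogram[freqs[i]]
    lo, hi = freqs[i - 1], freqs[i]
    t = math.log2(freq_hz / lo) / math.log2(hi / lo)  # position in octaves
    return audiogram[lo] + t * (audiogram[hi] - audiogram[lo])

def formant_audible(audiogram: Mapping[float, float],
                    freq_hz: float, level_db_hl: float) -> bool:
    """Treat a formant as audible if the threshold at its frequency is at or
    below the formant's plotted level (lower dB HL means better hearing)."""
    return threshold_at(audiogram, freq_hz) <= level_db_hl

# Hypothetical sloping audiogram (Hz -> dB HL), not the one in Figure 1.
example = {250: 20, 500: 25, 1000: 40, 2000: 70, 4000: 85}
print(formant_audible(example, 300, 45))   # True: low-frequency formant
print(formant_audible(example, 2300, 40))  # False: high-frequency formant
```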

This audiogram suggests that the individual could detect F1 for both /u/ and /i/ from acoustic information alone. They might also be able to identify /u/, since its F2 should also be audible in quiet. However, without improved auditory thresholds for sounds above 1000 Hz, it is unlikely that they would identify /i/, even in a quiet setting, because they cannot hear its F2. They may misidentify this vowel, confusing it with others that have similar first formants, such as /u/.
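The confusion described above can be illustrated by listing which vowels remain plausible when only F1 is audible. This sketch assumes a listener matches the heard F1 against every vowel whose F1 lies within a tolerance (100 Hz here, an arbitrary choice). The formant values are approximate adult-male figures included only for illustration; they are not the Table 1 values, which should be substituted in practice.

```python
# Approximate adult-male (F1, F2) values in Hz, for illustration only.
# Substitute the values from Table 1 in practice.
VOWEL_FORMANTS = {
    "i": (270, 2290),
    "ɪ": (390, 1990),
    "u": (300, 870),
    "ʊ": (440, 1020),
    "ɑ": (730, 1090),
}

def f1_only_candidates(target, tolerance_hz=100, table=VOWEL_FORMANTS):
    """Vowels that could be confused with `target` when only F1 is audible:
    every vowel whose F1 lies within tolerance_hz of the target's F1."""
    target_f1 = table[target][0]
    return sorted(vowel for vowel, (f1, _f2) in table.items()
                  if abs(f1 - target_f1) <= tolerance_hz)

print(f1_only_candidates("i"))  # ['i', 'u']
```

With F2 inaudible, /i/ and /u/ fall into the same candidate set, which is the misidentification risk the text describes; an audible F2 would separate them.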

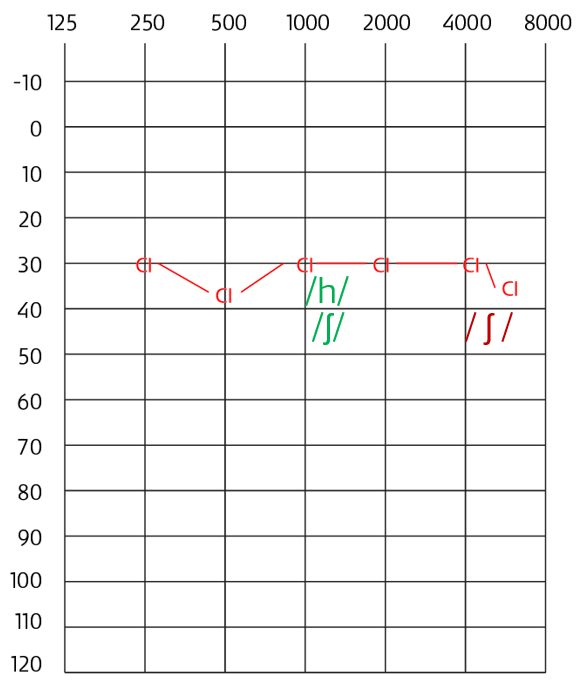

Consonants can be analyzed in much the same way. For example, if a child substitutes /h/ for /ʃ/ (‘sh’) during the Ling 6 Sound Test, could this error be the result of a misperception?

Figure 2: Example audiogram—Behavioral CI thresholds. Formants for /h/ and /ʃ/ in green and red.

Imagine that Figure 2 shows the child’s most recent audiogram. The formant values plotted for both sounds suggest that, in a quiet setting, the child would have auditory access to both. If further assessment supports this, we might conclude that the error is related to their speech production skills rather than their speech perception ability, and investigate production further.
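The reasoning in this example can be summarized as a simple first-pass rule: a listener can only tell two sounds apart by ear if both are accessible, so a substitution between two accessible sounds points toward production, while missing access to either sound leaves misperception as a possible cause. The function name and the two-way outcome are our simplification; the result is only a hypothesis to confirm with further assessment.

```python
def likely_error_source(target_accessible: bool, substitute_accessible: bool) -> str:
    """First-pass hypothesis for a substitution error such as /h/ for /ʃ/.

    Telling two sounds apart by ear requires access to both, so:
    - both accessible: the error more plausibly lies in production;
    - either inaccessible: misperception remains a possible cause.
    """
    if target_accessible and substitute_accessible:
        return "production"
    return "perception"

# Figure 2 scenario: both /ʃ/ (target) and /h/ (substitute) appear accessible.
print(likely_error_source(target_accessible=True, substitute_accessible=True))
```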

It is important to note that an auditory threshold is the softest level at which an individual can detect a sound at a specific frequency 50% of the time, usually measured in a quiet, sound-treated room. When comparing audiograms to formants, keep in mind that any impressions gathered from formant analysis should be validated against actual performance, both in a therapeutic setting and through audiometric speech perception testing.
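Because a threshold marks 50% detection, a formant plotted right at threshold is heard only about half the time. A logistic psychometric function makes this concrete. This is an illustrative sketch: the logistic shape and the 2 dB slope parameter are assumptions chosen for demonstration, not measured values.

```python
import math

def p_detect(level_db, threshold_db, slope_db=2.0):
    """Probability of detecting a sound at level_db, modeled as a logistic
    function centered on the threshold (where detection is exactly 50%).
    slope_db sets how quickly detection rises; 2 dB is an assumption."""
    return 1.0 / (1.0 + math.exp(-(level_db - threshold_db) / slope_db))

# Detection 10 dB below, at, and 10 dB above a hypothetical 40 dB HL threshold.
for margin in (-10, 0, 10):
    print(margin, round(p_detect(40 + margin, 40), 3))
```

Under this assumed slope, detection is near certain only well above threshold, which is one reason formant impressions from an audiogram need validating against real performance.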

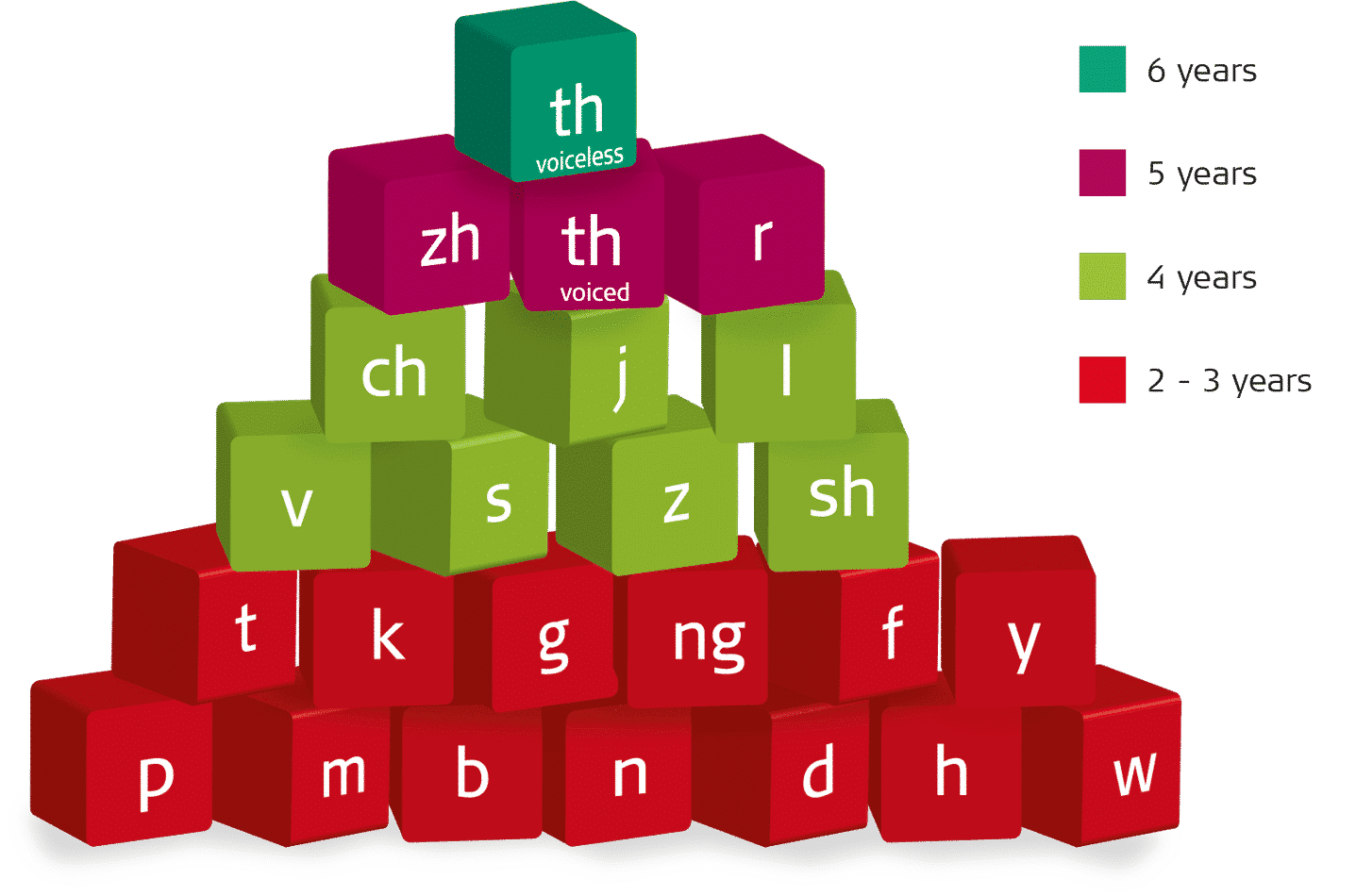

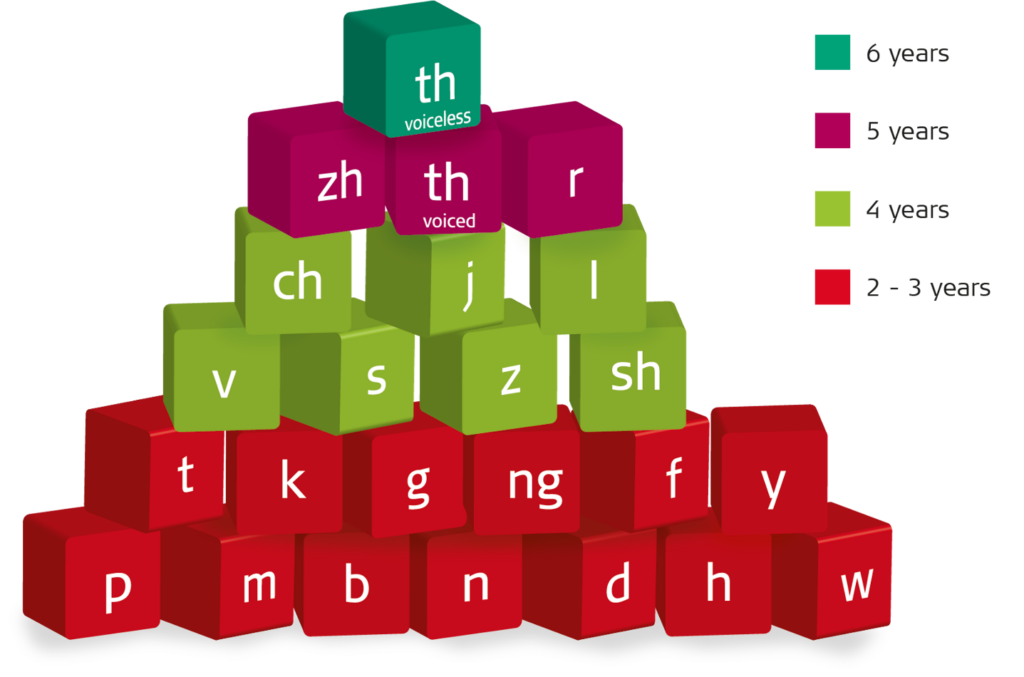

Figure 3: Acquisition of English consonants from birth to six years. The color of each block signifies the age when approximately 90% of typically developing children have acquired the sound shown.

Across major language groups, most children can accurately produce the phonemes of their native language by 5 years of age.[3] Figure 3 illustrates the age of acquisition for English consonants from birth to six years. When assessing progress, consider how long the child has had access to spoken language.

For children with typical hearing, this is their chronological age; for those who rely on hearing technology, it depends on the duration and quality of their device use. For instance, a four-year-old child with typical hearing might be expected to produce the phoneme /z/ accurately in speech, but a child of the same age who has been listening with a cochlear implant for just 6 months would not yet be expected to.
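The idea of “hearing age” can be sketched as follows. This is a simplification under stated assumptions: hearing age is taken as chronological age for children with typical hearing and as time since device activation for device users, ignoring the wear time and device quality that also matter. The acquisition age should be read from Figure 3; the 4-year value used below for /z/ is a placeholder consistent with the example above, not a value taken from the figure.

```python
from datetime import date
from typing import Optional

def hearing_age_years(birth: date, assessed: date,
                      activation: Optional[date] = None) -> float:
    """Years of access to sound: since birth for typical hearing, or since
    device activation for hearing technology users (simplified)."""
    start = birth if activation is None else activation
    return (assessed - start).days / 365.25

def expected_to_produce(hearing_age: float, acquisition_age: float) -> bool:
    """True if the child's hearing age has reached the age by which most
    typically developing children have acquired the sound."""
    return hearing_age >= acquisition_age

Z_ACQUISITION_AGE = 4.0  # placeholder; read the actual value from Figure 3

typical = hearing_age_years(date(2018, 1, 1), date(2022, 1, 1))
ci_user = hearing_age_years(date(2018, 1, 1), date(2022, 1, 1),
                            activation=date(2021, 7, 1))
print(expected_to_produce(typical, Z_ACQUISITION_AGE))  # True
print(expected_to_produce(ci_user, Z_ACQUISITION_AGE))  # False
```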

Early childhood talk is characterized by developmental speech patterns, more commonly known as phonological processes, in which young speakers may, for example, repeat sounds or syllables in words (e.g., ‘baba’ for ‘bottle’), drop sounds from word endings (e.g., ‘cu’ for ‘cup’), or replace sounds with others (e.g., ‘tun’ for ‘sun’). These patterns fade from speech as children mature.
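Spotting the three example patterns can be sketched as simple comparisons between a target word and the child’s production. This assumes aligned, segment-by-segment transcriptions (lists of IPA symbols) and uses deliberately narrow definitions; the symbol sets and function names are illustrative assumptions, not a complete phonological analysis.

```python
VOWELS = {"a", "e", "i", "o", "u", "ʌ", "ɑ", "æ", "ɛ", "ɪ", "ʊ", "ɔ", "ə"}
STOPS = {"p", "b", "t", "d", "k", "g", "ɡ"}  # ASCII g and IPA ɡ
FRICATIVES = {"f", "v", "s", "z", "ʃ", "ʒ", "θ", "ð"}

def final_consonant_deletion(target, produced):
    """Production equals the target minus its final consonant ('cu' for 'cup')."""
    return (len(target) >= 2 and target[-1] not in VOWELS
            and list(produced) == list(target[:-1]))

def stopping(target, produced):
    """Same length, with at least one fricative replaced by a stop ('tun' for 'sun')."""
    return len(target) == len(produced) and any(
        t in FRICATIVES and p in STOPS for t, p in zip(target, produced))

def reduplication(produced_syllables):
    """Two or more identical syllables ('baba' for 'bottle')."""
    return len(produced_syllables) >= 2 and len(set(produced_syllables)) == 1

print(final_consonant_deletion(["k", "ʌ", "p"], ["k", "ʌ"]))  # True
print(stopping(["s", "ʌ", "n"], ["t", "ʌ", "n"]))             # True
print(reduplication(["ba", "ba"]))                           # True
```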

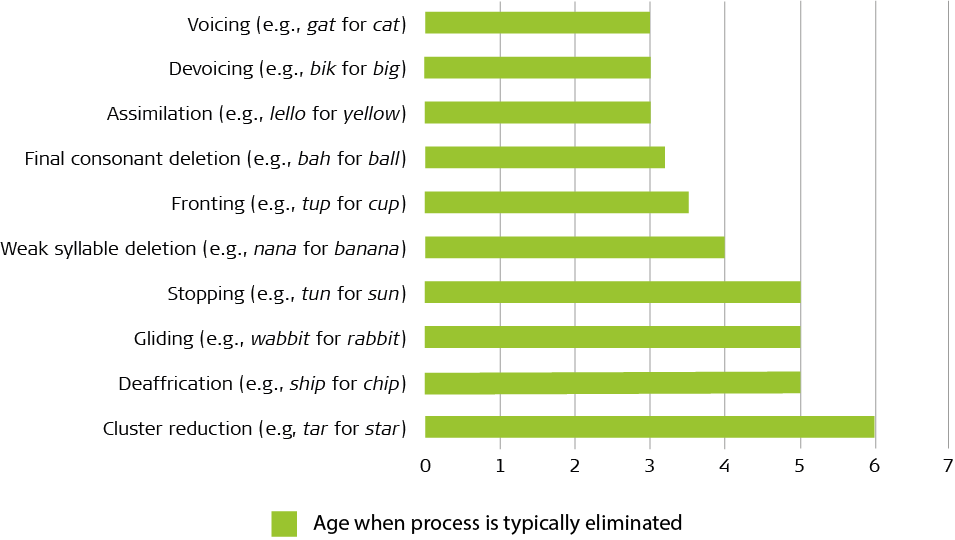

Figure 4: Developmental phonological patterns commonly observed in children with hearing loss

The chart in Figure 4 displays the phonological patterns of typically developing children that persist longest among children with hearing loss. It also indicates the approximate ages at which these patterns disappear from typically developing speech.

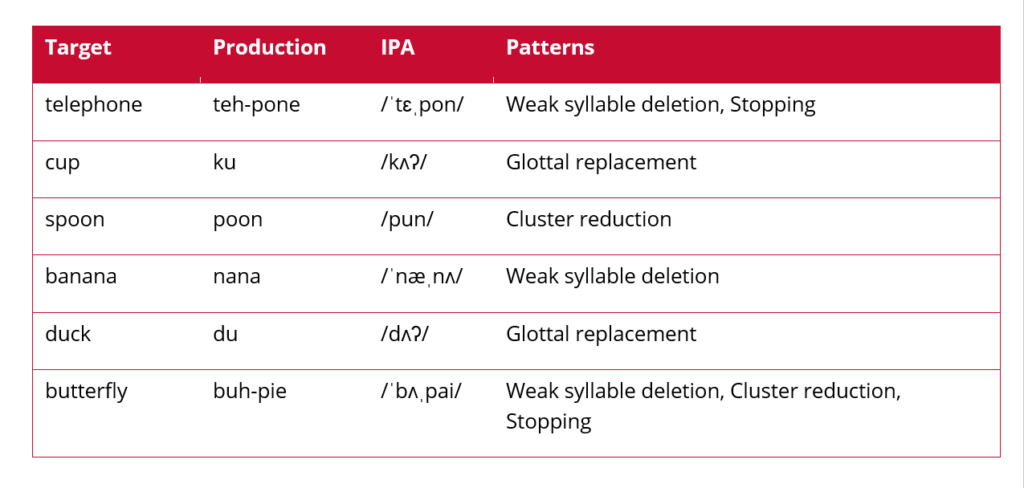

Table 2: A partial speech sample revealing weak syllable deletion, stopping, and cluster reduction.

When analyzing developing speech, it is helpful to identify these patterns so that priorities for intervention can be set. Consider the short speech sample in Table 2, taken during a therapy session with a four-year-old cochlear implant recipient who has worn their device consistently since its activation 2.5 years ago. Several developmental processes, such as weak syllable deletion, stopping, and cluster reduction, are still present in the child’s speech. The child also uses glottal replacement, an atypical (non-developmental) speech pattern that typically developing children generally do not use at any stage.

From a cursory analysis of this sample, several recommendations can be made. First, a larger speech sample of at least 75 tokens is advised, both to identify any other phonological patterns in the child’s speech and to confirm that the child already has a repertoire of early-developing vowels and consonants appropriate to their hearing age.[4]
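Tallying patterns across a larger sample can be sketched like this. The 75-token minimum and the treatment of glottal replacement as atypical come from the text; the input format (one list of pattern labels per token) and the example tokens are hypothetical, not the data in Table 2.

```python
from collections import Counter

MIN_TOKENS = 75                     # minimum sample size suggested in the text
ATYPICAL = {"glottal replacement"}  # non-developmental pattern named in the text

def summarize_sample(token_patterns):
    """Summarize a speech sample.

    token_patterns: one list of pattern labels per token (an empty list
    means the token was produced correctly).
    """
    counts = Counter(p for patterns in token_patterns for p in patterns)
    return {
        "tokens": len(token_patterns),
        "sufficient": len(token_patterns) >= MIN_TOKENS,
        "counts": dict(counts),
        "atypical": sorted(p for p in counts if p in ATYPICAL),
    }

# Hypothetical tokens for illustration only.
sample = [["stopping"], ["cluster reduction", "stopping"], [],
          ["weak syllable deletion"], ["glottal replacement"]]
print(summarize_sample(sample))
```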

We would then want to establish that the child has auditory access to all sounds produced in error, which can be done using the formant analysis described above. If the larger sample yields no additional findings, we would then address the phonological processes that are the most immature and have the greatest impact on overall intelligibility.

In the example above, our four-year-old is relatively advanced given a hearing age of 2.5 years, although their speech is still delayed compared to that of typically hearing peers. The majority of four-year-old English speakers do not omit unstressed syllables from words or use glottal substitution. These speech behaviors have the greatest impact on speech intelligibility and could be selected for immediate intervention.
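The prioritization reasoning can be sketched as a sort: atypical patterns first, then developmental patterns by how far past their typical elimination age the child is, with more frequent patterns first on ties. The elimination ages below are hypothetical placeholders to be replaced with values from Figure 4, and the counts are invented for illustration. Using chronological age as the reference point (as the text does when comparing with four-year-old peers) is one choice among several, and impact on intelligibility still calls for clinical judgment.

```python
INF = float("inf")

# Hypothetical elimination ages in years; replace with values from Figure 4.
ELIMINATION_AGE = {
    "weak syllable deletion": 3.5,
    "stopping": 4.5,
    "cluster reduction": 4.5,
}
ATYPICAL = {"glottal replacement"}  # not part of typical development

def prioritize(counts, reference_age, elimination_age=ELIMINATION_AGE):
    """Return (ordered patterns, patterns suggested for immediate intervention).

    counts: pattern label -> number of occurrences in the sample.
    reference_age: age in years used as the comparison point.
    """
    def overdue(pattern):
        # Years past the typical elimination age; unknown patterns get -inf.
        return reference_age - elimination_age.get(pattern, INF)

    def key(pattern):
        # Atypical first (False sorts before True), then most overdue,
        # then most frequent.
        return (pattern not in ATYPICAL, -overdue(pattern), -counts[pattern])

    ordered = sorted(counts, key=key)
    immediate = [p for p in ordered if p in ATYPICAL or overdue(p) > 0]
    return ordered, immediate

# Hypothetical counts, not the Table 2 sample.
sample_counts = {"weak syllable deletion": 3, "stopping": 4,
                 "cluster reduction": 2, "glottal replacement": 2}
ordered, immediate = prioritize(sample_counts, reference_age=4.0)
print(ordered)
print(immediate)  # ['glottal replacement', 'weak syllable deletion']
```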

Acoustic and developmental speech information is undoubtedly useful for guiding clinical decisions for children with hearing loss. The Building Blocks of Speech combines all the reference material presented here, including vowel and consonant formant tables and charts of sound acquisition and phonological development.

The Building Blocks of Speech does not replace formal standardized assessments conducted in audiology or therapy clinics. Instead, it can alert interventionists to the need for further evaluation. It’s a clinical tool that aural habilitation and hearing implant professionals can reach for whenever they need to quickly analyze the emerging speech perception and production skills of the children with hearing loss they serve.

The Building Blocks of Speech poster is available as a free download in English from MED-EL Rehabilitation.

For more on childhood language development, check out our blog article introducing the tool, A Child’s Journey Developmental Milestones.

If you’re interested in more free MED-EL resources for rehabilitation professionals, Aneesha Pretto offers an overview of our materials for assessing and monitoring the progress of adolescent and adult hearing implant recipients in our recent podcast series.

Aneesha also reviews some key considerations for counselling hearing implant recipients with single-sided deafness (SSD) and developing rehabilitation programs for them.

All that and more can be found at: https://medel.libsyn.com/category/Rehabilitation

Special thanks to Aneesha Pretto, Advanced Rehabilitation Manager at MED-EL, for writing and contributing this article.


The content on this website is for general informational purposes only and should not be taken as medical advice. Please contact your doctor or hearing specialist to learn what type of hearing solution is suitable for your specific needs. Not all products, features, or indications shown are approved in all countries.
